The ideal product would fit 100 percent of customers.

In practice, this is almost impossible. Every customer wants slightly different features, workflows, integrations, and edge-case behavior. The more customers you have, the more divergent those needs become.

Say you are building a customer service chatbot and providing a platform on which your customers can customize and monitor its responses.

The traditional solution: feature flags everywhere

The usual approach is well known:

Add configuration options, add feature flags, add per-customer logic, add more abstraction layers.

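This works, up to a point, and most modern SaaS products are built this way. But every flag multiplies the number of configurations you have to test and maintain, and it gets harder to generalize across all your customers.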
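To make the problem concrete, here is a minimal sketch of where per-customer flags tend to lead. The customer names and flag keys are hypothetical. The point is that each flag adds a branch to runtime code, and n independent on/off flags yield 2**n possible configurations.

```python
# Hypothetical per-customer flags for the chatbot example.
CUSTOMER_FLAGS = {
    "acme": {"crm_lookup": True, "formal_tone": False, "short_replies": True},
    "globex": {"crm_lookup": False, "formal_tone": True, "short_replies": False},
}


def build_reply(customer: str, answer: str) -> str:
    """Apply per-customer behavior; each new flag adds another branch here."""
    flags = CUSTOMER_FLAGS.get(customer, {})
    if flags.get("crm_lookup"):
        answer = f"[account context attached] {answer}"
    if flags.get("formal_tone"):
        answer = f"Dear customer, {answer}"
    if flags.get("short_replies"):
        # Keep only the first sentence, making sure it ends with a period.
        first = answer.split(". ")[0]
        answer = first if first.endswith(".") else first + "."
    return answer


def configurations_to_test(num_flags: int) -> int:
    """n independent on/off flags give 2**n combinations that could interact."""
    return 2 ** num_flags
```

Three flags already mean 8 combinations; ten flags mean 1,024, most of which nobody tests.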

I think there is room for a better strategy.

A different approach: agent-first SaaS

Make the coding agent a core feature that improves your product.

Instead of building those feature flags, design a set of skills, prompts, commands, unit tests, and tools that you give your agent so it can improve the code. The most important part is letting the agent reason over a feedback loop: it makes a change, runs the tests, reads the failures, and tries again.
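The feedback loop can be sketched in a few lines. This is a minimal illustration, not a real agent integration: `propose_fix` is a hypothetical stand-in for a call to whatever coding agent you use, the "code" is a plain dict, and the tests are Python callables. The loop runs the tests, hands every failure message to the agent, and repeats until everything passes or a retry budget runs out.

```python
from typing import Callable, Optional

# A test inspects the code and returns None on success or a failure message.
Test = Callable[[dict], Optional[str]]
# Hypothetical stand-in for the coding agent: takes code + failures, returns new code.
Agent = Callable[[dict, list[str]], dict]


def run_tests(code: dict, tests: list[Test]) -> list[str]:
    """Run every test against the current code and collect failure messages."""
    return [msg for test in tests if (msg := test(code)) is not None]


def improve(code: dict, tests: list[Test], propose_fix: Agent,
            max_rounds: int = 5) -> tuple[dict, list[str]]:
    """Let the agent iterate until all tests pass or the round budget is spent."""
    failures = run_tests(code, tests)
    for _ in range(max_rounds):
        if not failures:
            break
        code = propose_fix(code, failures)  # the agent sees every failure message
        failures = run_tests(code, tests)
    return code, failures
```

In a real setup, `code` would be files in a repository and the tests would be your unit tests and evals; the dict only keeps the sketch runnable. The retry budget matters because an agent that cannot fix a failure should stop and surface it to a human.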

Keep your core product small, opinionated, and stable. Let agents like Claude Code, OpenCode, Cursor, and others improve it. In the end, your SaaS is just code, and agents are very good at producing code.

What’s interesting is that even non-technical people can get involved, because they know how to prompt and how to report errors and broken behavior. In fact, they often seem to be the best at it, because they are closer to the real business. This paradigm puts the client in the loop, which is extremely valuable.

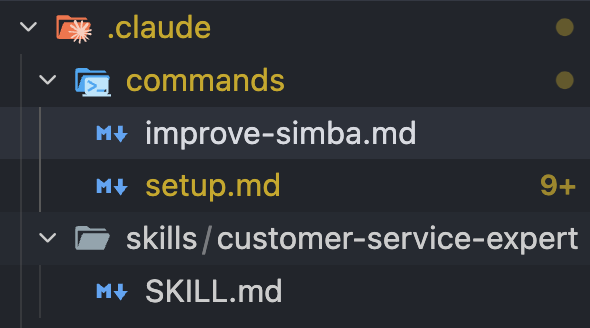

For open-source products designed this way, the main repository stays opinionated and minimal, but it comes equipped with the scaffolding that makes customization natural. The repo includes well-structured prompts, slash commands, and skills that serve as templates and extension points.
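As a rough illustration of that scaffolding, the sketch below writes one slash command and one skill into a repository. The folder locations follow Claude Code's convention for project commands (`.claude/commands/`) and skills (`.claude/skills/<name>/SKILL.md`, with `name` and `description` front matter); other agents use different layouts. The command and skill names, their wording, and the `tools/` directory they mention are hypothetical.

```python
from pathlib import Path

# Hypothetical command for the customer service example. Claude Code replaces
# $ARGUMENTS with the text typed after the slash command; `tools/` is an assumed layout.
ADD_INTEGRATION_COMMAND = """\
Add a new data integration to the assistant: $ARGUMENTS

1. Put the client code in a new module under tools/ and register it as a tool.
2. Mention the new tool in the system prompt so the bot knows when to call it.
3. Add eval cases that exercise the integration, then run the evals.
"""

# Hypothetical skill: the front matter tells the agent when the skill applies.
TONE_SKILL = """\
---
name: adjust-tone
description: Adjust the assistant's tone of voice and reply length for a customer.
---
Edit only the prompt files; do not change tool code. Re-run the evals afterwards.
"""


def scaffold(repo: Path) -> list[Path]:
    """Write the command and skill into the folders Claude Code reads them from."""
    files = {
        repo / ".claude" / "commands" / "add-integration.md": ADD_INTEGRATION_COMMAND,
        repo / ".claude" / "skills" / "adjust-tone" / "SKILL.md": TONE_SKILL,
    }
    for path, text in files.items():
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(text)
    return list(files)
```

Because these are plain Markdown files checked into the repo, a fork inherits them and can extend them like any other code.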

Here’s an example of drafted skills and commands to improve the product:

Prompts become the feature

Back to the customer service example:

- One client wants a specific integration connected to custom enterprise tools for data gathering. Good: design prompts and skills that guide the coding agent to build that integration.

- Another client wants a customer service bot that focuses more on speed and tone of voice. Nice: design prompts and skills that make that possible.
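Here is one way that could look in code: a small, opinionated base prompt plus per-customer prompt layers that the agent writes and edits, instead of flags branching in the runtime. The layer names, their contents, and the `crm_lookup` tool are illustrative assumptions, not Simba's actual prompt structure.

```python
BASE_PROMPT = "You are a customer service assistant. Answer from the knowledge base only."

# Customer-specific layers are plain text, so a coding agent can add or edit them
# (and a non-technical client can review them) without touching runtime logic.
CUSTOMER_LAYERS = {
    "acme": ["Use the `crm_lookup` tool to fetch order data before answering."],
    "globex": ["Reply in at most two sentences.", "Keep a warm, informal tone."],
}


def system_prompt(customer: str) -> str:
    """Compose the base prompt with any layers defined for this customer."""
    return "\n".join([BASE_PROMPT, *CUSTOMER_LAYERS.get(customer, [])])
```

A customer without layers simply gets the base prompt, so the core product stays the default.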

Prompts become the feature. A prompt or skill written for one customer becomes available to all of them. Bet 100 percent on agents, to the point where agents, not humans, are at the wheel of your product.

A new paradigm for open source

For open-source products, this paradigm is a natural fit because it lets engineers customize the software. If complex software becomes easier to customize, far more people will find open-source products useful and contribute at a faster pace.

That makes me so excited.

Simba: an example of this approach in practice

To demonstrate how this works in practice, I built Simba, an open-source customer service assistant that embodies these principles.

Simba is built around one core idea: strong evals enable safe, automated customization.

Here’s a demo of the improvement loop in action, with Claude Code as my coding agent:

The improvement strategy

Simba’s approach centers on a tight feedback loop between evaluation and code modification:

Continuous evaluation. You create an eval dataset of real or synthetic conversations that define correct behavior for your use case. Simba runs these evals and monitors performance continuously. Failures are detected automatically.
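A minimal sketch of such an eval set and runner, assuming each case is a conversation plus phrases the reply must and must not contain. Real setups often use an LLM judge instead of substring checks. `EvalCase`, `EvalResult`, `run_evals`, and the `bot` callable are hypothetical names, not Simba's API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EvalCase:
    case_id: str
    conversation: list[str]                     # user turns, oldest first
    must_include: list[str]                     # phrases the reply must contain
    must_not_include: list[str] = field(default_factory=list)


@dataclass
class EvalResult:
    case_id: str
    reply: str
    passed: bool
    problems: list[str]


def run_evals(bot: Callable[[list[str]], str], cases: list[EvalCase]) -> list[EvalResult]:
    """Run every case through the bot and check the reply (case-insensitively)."""
    results = []
    for case in cases:
        reply = bot(case.conversation)
        text = reply.lower()
        problems = [f"missing: {p!r}" for p in case.must_include if p.lower() not in text]
        problems += [f"forbidden: {p!r}" for p in case.must_not_include if p.lower() in text]
        results.append(EvalResult(case.case_id, reply, not problems, problems))
    return results
```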

Structured failure reports. When evals fail, Simba produces a structured report with clear, actionable information about what broke and why: not just error messages, but context about the conversation, the expected behavior, and the deviation.
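A structured report can be as simple as JSON that carries the conversation, the expectation, the actual reply, and the observed deviation for each failure. The input keys and report field names below are assumptions for illustration; Simba's actual report format may differ.

```python
import json


def failure_report(failures: list[dict]) -> str:
    """Turn raw eval failures into a JSON report a coding agent can act on.

    Each input dict is assumed to hold: case_id, conversation, expected,
    reply, and problems (a list of short descriptions of what went wrong).
    """
    report = {
        "summary": f"{len(failures)} eval case(s) failed",
        "failures": [
            {
                "case_id": f["case_id"],
                "conversation": f["conversation"],
                "expected_behavior": f["expected"],
                "actual_reply": f["reply"],
                "deviation": f["problems"],
            }
            for f in failures
        ],
    }
    return json.dumps(report, indent=2)
```

Keeping the report machine-readable means the same file can feed a dashboard for humans and a prompt for the agent.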

Coding agent integration. The report is exported directly to Claude Code. Claude sees the failures, the constraints, the expected behavior, and the existing code structure (prompts, tools, business logic).
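One way to hand such a report to Claude Code is its non-interactive print mode (`claude -p "<prompt>"`). The sketch below only builds the command; the prompt wording and the `reports/evals.json` path are hypothetical, and this is not necessarily how Simba performs the export.

```python
import shlex
from pathlib import Path


def agent_command(report_path: Path) -> list[str]:
    """Build a Claude Code invocation that asks the agent to fix failing evals."""
    prompt = (
        f"Read the eval failure report at {report_path}. "
        "For each failure, find the prompt, tool, or business logic responsible, "
        "make the smallest change that fixes it, and re-run the evals."
    )
    return ["claude", "-p", prompt]


cmd = agent_command(Path("reports/evals.json"))
print(shlex.join(cmd))  # shell-quoted, for inspection before running
# To actually run it: subprocess.run(cmd, check=True)
```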

Guided code updates. Claude updates prompts, tools, and logic based on this data. Because the repo is structured with clear extension points, Claude knows exactly where and how to make changes that fit the existing architecture.
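Clear extension points are what make the agent's edits predictable. A common pattern, shown here as a hypothetical sketch rather than Simba's code, is a single tool registry: adding a capability means writing one decorated function, so both the agent and the reviewer know where a change belongs. The `order_status` tool is a made-up example.

```python
from typing import Callable

TOOLS: dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Register a function as a tool the assistant may call."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        if name in TOOLS:
            raise ValueError(f"tool {name!r} already registered")
        TOOLS[name] = fn
        return fn
    return register


@tool("order_status")
def order_status(order_id: str) -> str:
    # Hypothetical stand-in for a call to the client's order system.
    return f"Order {order_id} is in transit."


def call_tool(name: str, **kwargs) -> str:
    """Dispatch a tool call requested by the model."""
    if name not in TOOLS:
        return f"Unknown tool: {name}"
    return TOOLS[name](**kwargs)
```

Rejecting duplicate names turns an accidental overwrite by the agent into a loud error instead of a silent behavior change.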

Validation loop. You review the proposed changes, run them against your evals to verify improvements, and merge them into your fork.
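The review step can be backed by a simple merge gate: run the evals on the current code and on the agent's branch, then accept the change only if at least one failing case now passes and no previously passing case regresses. The rule itself is an illustrative assumption; some teams prefer a pass-rate threshold instead.

```python
def should_merge(before: dict[str, bool], after: dict[str, bool]) -> tuple[bool, list[str]]:
    """Compare per-case eval results (case_id -> passed) before and after a change.

    A case missing from `after` counts as failing. Returns whether to merge
    and the sorted list of regressed case ids.
    """
    regressions = sorted(c for c, ok in before.items() if ok and not after.get(c, False))
    fixed = [c for c, ok in before.items() if not ok and after.get(c, False)]
    return (not regressions and bool(fixed)), regressions
```

Reporting the regressed case ids, not just a yes/no, gives the agent something concrete to work on in its next iteration.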

No feature flags. No guessing. No manual prompt tweaking. The coding agent becomes directly responsible for improving the accuracy of the whole system, learning from real failures and iterating in a tight loop with your evaluation data.

If you want to add efficient customer service to your website, check it out here: https://github.com/GitHamza0206/simba